Modern large language models are powerful, but here's what nobody tells you. The gap between a demo that impresses your VP and a production system that consistently delivers value is wider than it looks. You can't ship a prompt and hope for the best.

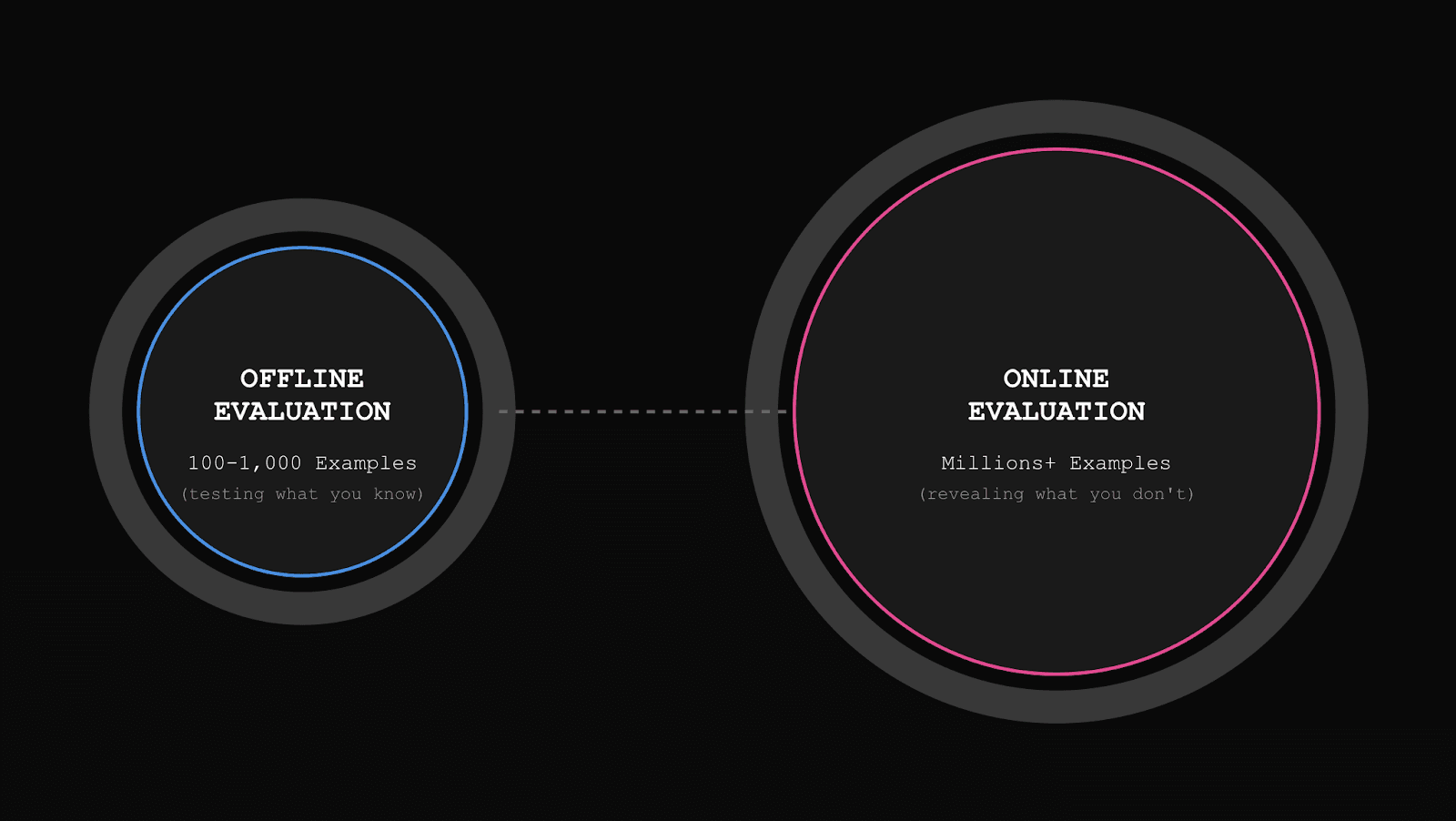

LLM evaluation is how you measure whether your model's outputs actually work for your specific use case. This is where most teams get it wrong. They think evaluation is just about running static test datasets before deployment. That's only half the story. Effective LLM evaluation requires both offline evaluation (testing against curated datasets during development) and online evaluation (continuously assessing real production traffic). To understand how these fit together in your development process, see our guide on the new AI development workflow.

To establish a solid production-ready system, you'll want to build up a set of both online and offline evaluations over time. These will help you monitor production quality, inspect errors, and then test any changes you make to your system before you ship it. This guide shows you how to evaluate LLMs both offline and in production, choose evaluation metrics that matter for your specific use case, and build an evaluation framework that helps you ship confidently and improve continuously.

What Is LLM Evaluation and Why Does It Matter

LLM evaluation systematically measures whether your model's outputs meet your requirements. The core question: "Is this output good enough?" Answering that question often involves nuance specific to your product.

Evaluation happens in two critical modes:

Offline evaluation involves testing your model against curated datasets with ground truth examples during development. You know the inputs, you know what good outputs should look like, and you measure how your model performs relative to those expectations. This helps you assess performance on examples you've prepared.

Online evaluation (production evaluation) involves continuously assessing real traffic after deployment. You sample production logs, score them against your quality criteria, and compare those scores to your offline expectations. This shows you how your system actually behaves with real users, and there's almost always a difference.

Why both modes matter:

You can't improve what you don't measure. Without systematic evals, you're flying blind. For some LLM applications, production behavior bears little resemblance to development behavior. This means that your model might shine on carefully curated test data, then struggle when real users interact with it in unexpected ways. Only production evaluation reveals this gap.

Evaluation uses different approaches that work together:

Human review and labeling remain fundamental for both contexts

LLM-as-a-judge scoring provides a scalable way to evaluate qualitative aspects

Custom code and heuristics catch specific issues (like regex checks for formatting)

Traditional NLP metrics like precision, recall, F1 score, ROUGE, or BLEU (Bilingual Evaluation Understudy) can be useful for specific tasks, like categorization tasks

Each has its role. The key is finding the right mix for your use case, and no matter what, you need human review. Automation helps you scale, but human judgment enables you to decide what to measure, catch nuances, and discover issues you aren't measuring yet.

Common Challenges in LLM Evaluation

Every team building production AI systems hits the same walls. Let's discuss what actually hinders progress.

Curating good datasets and ground truth examples takes significant work. You need test data reflecting production distribution, but creating this is time-consuming and requires domain expertise. For some use cases like translation, there's a clear right answer. For others, like news summaries or customer service, even human experts might disagree about what "good" looks like.

Most development teams have never done LLM evals before. Traditional engineers know how to write unit tests, but LLM evaluation involves unfamiliar processes. They don't know where to start, what tools exist, or how to measure subjective quality. The learning curve is steeper than it looks.

Deciding what to measure is genuinely difficult. What constitutes "good" for your specific use case? A human expert might immediately have a clear sense, but articulating the evaluation criteria they use to judge quality is hard. Turning those into a system others can use, or that can be automated with LLM judges, requires deliberate thought and iteration.

Manual eval doesn't scale, but you still need it. Human review gives you the most accurate signal, but it's slow and expensive. You need fast feedback without waiting days for review cycles. But you also can't fully automate away human judgment for nuanced quality dimensions, especially when validating that your automated evals work correctly.

The industry pushes universal metrics that might not matter for your use case. The AI community has created dozens of eval metrics like "toxicity" or "hallucination" and industry LLM evaluation benchmarks like MMLU and HumanEval. But just because a metric exists or a benchmark is popular doesn't mean it's relevant to what you're building. We've seen teams track metrics that look impressive but have zero correlation with actual product quality.

The gap between offline and production performance is often larger than expected. You might achieve 95% success on your curated test set, then discover you're at 70% in production because real users interact with your system in ways you didn't anticipate. Without production evaluation, you'd never know this gap exists.

Choosing The Right LLM Evaluation Metrics

Most LLM eval guides make this part seem easier than it is. We're not going to lie, it takes some intuition and iteration. That said, here are some pointers we've seen work in practice.

There is no universal set of LLM evaluation metrics that every team should track (other than cost and latency, and those aren't "evals"). The evaluation metrics that matter depend entirely on what you're building and what "quality" means in your context. We're being contrarian here: chasing standardized benchmarks and generic evaluation frameworks often leads teams away from measuring what actually matters.

The worst example? LLM evaluation tools that offer just three generic metrics for every use case: Hallucination, Toxicity, and Bias. These might matter for some products, but they're not actionable for most teams and don't capture the specific quality dimensions that differentiate your product.

What makes evaluation metrics actually useful

Good eval metrics share three critical characteristics:

They're actionable. If your metric detects an issue, your team should be able to imagine concrete ways to fix it. Vague quality scores that don't point to specific problems aren't helpful.

They matter to your users. It's easy to measure things that don't actually impact user experience. Your metrics should capture dimensions that, when improved, make a real difference to the people using your system.

They're relevant to your product. Generic eval frameworks written by other teams might not apply to your specific use case. Your customers care about different things than someone else's customers.

For more on this process, check out our guide on defining the right evaluation criteria for your LLM project.

Clues to Discovering the Right Metrics

While we at Freeplay don't believe in prescriptive metric lists, the evaluation process should focus on high-level indicators that almost every team benefits from tracking. Once you start thinking in these terms, you might discover additional metrics that matter. The areas we recommend focusing on are:

Positive output quality metrics that track whether the right thing is happening, e.g., "completeness" to describe whether each part of a user's question is addressed in an answer

Negative quality or behavioral metrics that track whether some known bad thing is happening, e.g., "prompt injection attempt" to describe whether a user query is trying to hack your prompts

Neutral metrics that help you make sure your application can work correctly, like whether formatting for a response matches an expected pattern to display in your UI

Different Approaches to LLM Evaluation

LLM evaluation methods require different tactics depending on whether you're evaluating LLMs offline (during development) or online (in production).

Offline Evaluation: Testing Before You Ship

Offline LLM evaluations involve testing your model against curated datasets with known ground truth. The evaluation process:

Curate a representative dataset that includes real examples from production (if you have them), plus edge cases you've identified. If you don't have production examples yet, write your own for examples you'd expect.

Define ground truth for your examples — Don't overthink this. Just write the answer you'd hope to see.

Run your model against the dataset — generate outputs for each input in your test set.

Score outputs against your criteria using human review at first, and mark whether you think something is better/worse in one column with a note about why in another column. Then add automated scoring as you identify important metrics.

Iterate until performance is acceptable based on where your model struggles.

Use offline evals as regression tests — run them again on every significant change before deploying.

Offline evaluation strengths: Fast feedback loops, controlled environment, reproducible, can test edge cases deliberately, can test different models easily

Offline LLM evaluations limitations: Only test examples you thought of or observed in the past might not reflect real production distribution, and can't capture emergent usage patterns

For more on integrating testing and evaluation into your workflow, check out our guide on prompt engineering and testing for product teams.

Online Evaluation: Monitoring Production Reality

Online evaluation continuously assesses real traffic after deployment. The LLM evaluation methods for production:

Sample production logs systematically using strategies like random sampling.

Apply automated scoring to samples using the same evaluators you developed for offline eval whenever possible.

Create monitors and alerts for key metrics that matter.

Route samples for human review — when you detect errors that need human attention, feed them into review queues.

Feed insights back into offline evaluation — when you discover issues in production, add those examples to your offline evaluation dataset.

Online evaluation strengths: Reflects real usage, catches unexpected issues, validates offline assumptions, enables continuous monitoring

Online evaluation limitations: Slower feedback (can't iterate as quickly), noisier signal, harder to control variables

Implementing an LLM Evaluation Framework

Building an evaluation framework for your LLM app requires thinking about both offline and online evaluation from the start.

Start with logging and observability

Before you can do any evaluation, you need realistic data. Instrument your LLM application to log:

User prompt and model's performance outputs

Model configuration (which model, temperature, prompt template)

Timestamp and session identifiers

Any intermediate reasoning or tool calls for agent systems

Human feedback signals when available (thumbs up/down, corrections, etc.)

Production data metadata that helps you filter and sample

Without good LLM observability, you'll quickly end up stuck and looking at unrealistic data you imagine will come from your system. You need this data both to create representative offline test sets and to enable online evaluation.

Build offline evals into your development workflow

Create and maintain offline test datasets:

Start with manually crafted examples based on expected use cases

Add real production examples once you have them to make your test set more representative

Include edge cases discovered through production monitoring

Document ground truth for each example

Version your test datasets so you can track how eval expectations evolve

Integrate evaluation into your workflow: When you modify a prompt template, change a model, or adjust agent logic, run your offline eval suite before deploying. This gives you immediate feedback about whether your change improved things, made them worse, or had no effect. Over time, you'll want to automate your evals as much as possible, so this can happen fast.

Implement online evaluation and monitoring

Set up automated sampling and scoring:

Define sampling strategies for different purposes

Apply automated evaluators to sampled production logs

Store evaluation scores alongside production data for analysis

Create dashboards showing trends over time

Create human review workflows:

Define which production samples need human review

Build review queues where domain experts can efficiently review and label outputs

Use insights to validate that automated scoring aligns with expert judgment

Feed human-reviewed examples back into offline test sets

Compare offline expectations to production reality: Regularly analyze how your production evaluation results compare to your offline evaluation test results. If there's a significant gap in model responses, your offline test set might not be representative, user behavior may have evolved, or edge cases in production data might not have been covered in offline evaluations. Update your offline test sets based on these insights.

One common use case benefiting from both offline and online evals: determining which model works best for your specific use case. Test different models offline first, then validate in production that the winner actually performs better with real traffic. Learn more in our guide on how to pick the best model for your LLM app in under 5 minutes.

Pro Tip: Balance rigor with velocity

There's genuine tension between thorough evaluation and shipping quickly. Perfect evals are impossible. The goal is to build enough confidence to ship safely while maintaining the ability to iterate quickly.

For offline evaluations, start with simpler approaches and add sophistication as needed. If you catch 80% of serious issues with straightforward metrics, that's probably good enough to ship.

For online evaluations, you don't need to score every production output on every dimension immediately. Start by monitoring your highest-priority quality metrics and expand coverage as you learn what matters most.

The teams that succeed are the ones that systematically learn what quality means for their product in both development and production, building evaluation systems that reflect that understanding and improve over time.

Freeplay: Your Partner for Accurate LLM Evals

Building evaluation systems can be hard work. You know your product needs rigorous evals. This includes both offline testing before deployment and continuous monitoring in production. But the manual effort required (defining quality criteria, curating datasets, aligning LLM judges, building review workflows) is real, and most teams don't have the bandwidth to tackle it alone.

Freeplay gives you an integrated platform for the complete evaluation feedback loop: creating test datasets, running batch evaluations, aligning LLM judges with human judgment, monitoring production quality, and managing human review workflows. The platform was built to help you spend less time building eval infrastructure and more time actually improving your product.

Teams like Postscript use Freeplay to run dozens of custom evals before deploying prompt changes to thousands of e-commerce brands, ensuring they never break trust with merchants. Help Scout achieved 75% cost savings and shipped AI features faster, with one engineer getting their RAG system tested in 2 hours using Freeplay instead of the 2 days it would have taken otherwise.

Ready to get started? Explore our evaluation documentation or reach out to our team to discuss your specific use case.

Final Thoughts on LLM Evaluation

The teams that succeed with AI aren't the ones with perfect evaluation systems. Instead, they're the ones who systematically learn what quality means for their product and build practical ways to measure it in both development and production. Start with simple approaches, learn from real data, and progressively improve your eval systems over time.

With evals, the goal isn't to generate impressive metrics for stakeholders but to gain the confidence needed to ship improvements and catch issues before they reach users. For more guidance on how to build better AI products with evals, see our resources on building eval suites that work and creating trustworthy LLM judges.

FAQs About LLM Evals

What is the difference between automatic and human evaluation?

Automatic eval uses code, rules, or other AI models to assess outputs without human involvement. It's fast and scalable, but limited in what it can measure reliably. Human evaluation involves people reviewing outputs to judge quality, capturing nuanced dimensions that don't scale well but provide the most accurate signal. The best practical frameworks use both strategically: automated evaluation for speed and scale, with human evaluation for validation and nuanced judgment.

When should you use LLM-as-a-judge?

Use LLM-as-a-judge when you need to evaluate semantic quality dimensions difficult to capture with simple rules but where you can define clear criteria, like relevance, tone, or coherence. It works in both offline development and online production contexts. Always validate your LLM-as-a-judge against human evaluators periodically, using an alignment process to ensure they're measuring what you think they're measuring.

Can evaluation metrics detect hallucinations?

Only when you have a known ground truth can you compare against it directly. Otherwise, you can check whether outputs cite sources that actually exist, whether claims can be verified against the provided context, and whether responses contain contradictions. Metrics like "groundedness" or "answer faithfulness" approximate hallucination by looking at cited materials. The most reliable hallucination detection combines automated evaluations with human review of samples, especially for use cases where hallucinations would be particularly harmful.

What makes an evaluation reliable and reproducible?

Reliable eval means your metrics consistently measure what they're supposed to measure in both offline and online contexts. Define clear evaluation criteria, use consistent data and prompts, and validate automated metrics against human judgment regularly. Reproducibility requires documenting your eval methodology, using versioned datasets and prompts for offline evaluation, and having clear sampling and scoring procedures for online evaluation.

First Published

Oct 23, 2025

Authors

Sam Browning

Categories

Industry